Rémy Duthu

How I Work With Parallel Agents

For years, I optimized for flow. Long, uninterrupted blocks where I could hold an entire problem in my head, move from A to B without touching C or D. I read the deep work literature, silenced notifications, closed Slack. It worked.

Coding agents changed the equation. Not because they replaced the thinking, but because they introduced idle time in the middle of it. When you ask Claude Code to implement a feature, you don’t sit there watching it write files and run tests. You could, but it would be a waste. The agent might run autonomously for hours, and during that window you have a choice: do something else with your hands, or do something else with another agent.

Ethan Swan recently argued that context switching between multiple AI sessions is becoming a core engineering skill, that it’s “about to become table stakes.” Addy Osmani takes a more cautious view, sticking to one coding agent at a time and treating the AI as a pair programmer that needs constant direction. I’ve landed somewhere between the two. Running agents in parallel is genuinely productive, but there’s a ceiling, and it’s lower than I expected.

How I work with agents

I typically run at most two main agents in parallel. By “main” I mean agents working on substantial features, not quick chores. Each gets its own git worktree so they don’t step on each other’s files, and I keep the primary repository checkout free for my own manual work. I wrote a small git-worktree-init script that scaffolds two worktrees ({repo}-1 and {repo}-2) inside a .worktrees/ directory at the repo root, each on its own branch. A global .gitignore keeps those directories out of version control.
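The scaffolding described above can be sketched in a few lines. This is not the actual git-worktree-init script, only an illustration of the layout: the function name `worktree_commands` and the `agent/...` branch names are assumptions, while `git worktree add -b <branch> <path>` is the standard Git command for creating a worktree on a new branch.

```python
from pathlib import Path


def worktree_commands(repo_root: str, count: int = 2) -> list[list[str]]:
    """Build one `git worktree add` invocation per agent worktree."""
    root = Path(repo_root)
    repo = root.name
    commands = []
    for i in range(1, count + 1):
        name = f"{repo}-{i}"                 # e.g. myrepo-1, myrepo-2
        path = root / ".worktrees" / name    # lives inside the repo root
        branch = f"agent/{name}"             # hypothetical branch naming
        commands.append(
            ["git", "-C", str(root), "worktree", "add", "-b", branch, str(path)]
        )
    return commands
```

Each returned list can be passed to `subprocess.run(cmd, check=True)`. Building the commands separately from running them makes it easy to print them first and check what would be created.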

In my iTerm2 setup, the first two tabs are Claude Code sessions and the rest are mine. To keep track of what each agent is doing at a glance, I use Claude Code hooks to color each tab based on the agent’s state. Adding even one more agent beyond two noticeably increases the cognitive load for me. Not linearly, but in a way that feels closer to what managers describe when they talk about the cost of each additional direct report.
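A rough sketch of how a hook could recolor a tab, under several assumptions: that the hook receives a JSON payload on stdin with a `hook_event_name` field, that the events of interest are `UserPromptSubmit`, `Notification`, and `Stop`, and that the hook process can write to `/dev/tty`. The event-to-color mapping and the function names are made up for illustration. The escape sequence is iTerm2's proprietary tab-color code.

```python
import json
import sys

# Hypothetical mapping from hook event names to RGB tab colors.
STATE_COLORS = {
    "UserPromptSubmit": (70, 130, 220),  # agent is working: blue
    "Notification": (230, 160, 30),      # agent is waiting on me: orange
    "Stop": (60, 180, 90),               # agent finished its turn: green
}


def tab_color_sequence(rgb: tuple[int, int, int]) -> str:
    """Return iTerm2 escape codes that set the tab color, one per channel."""
    channels = zip(("red", "green", "blue"), rgb)
    return "".join(
        f"\033]6;1;bg;{name};brightness;{value}\a" for name, value in channels
    )


def sequence_for_event(payload: str) -> str:
    """Map a hook's JSON payload to an escape sequence ('' if unknown)."""
    try:
        event = json.loads(payload).get("hook_event_name", "")
    except (json.JSONDecodeError, AttributeError):
        return ""
    rgb = STATE_COLORS.get(event)
    return tab_color_sequence(rgb) if rgb else ""


def main() -> None:
    seq = sequence_for_event(sys.stdin.read())
    if seq:
        # Hook stdout is typically captured, so write to the terminal itself.
        with open("/dev/tty", "w") as tty:
            tty.write(seq)
```

A script that calls `main()` would be registered as the hook command for each of the three events. Keeping the payload parsing in `sequence_for_event` means the mapping can be checked without a terminal attached.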

Delegating the main development work to agents doesn’t mean I stop writing code. I still enjoy it, and there are things I want to learn by doing rather than delegating. What changes is that I get to choose. I can pick up a small technical task on the side, design future systems, review more PRs, or I can step back entirely and spend that time on things that usually get squeezed out: reading technical material, studying a new tool, thinking about architecture. The agents handle the bulk of the implementation and I get space for the higher-level work.

The key to making this work for me, and to not spending the whole day babysitting, is front-loading the planning. I use Claude Code’s Plan Mode extensively before letting an agent start writing code. My Claude Code settings default to plan mode so every session starts with planning, not coding. A good plan means I can trust the agent to run autonomously for a long stretch, which is what buys me time to focus on other things. I also ask agents to split their work into minimal, individually deployable commits, each one safe to ship on its own. This aligns well with how we work at Mergify, where every commit is pushed as its own PR using mergify stack and deployed independently.

Planning often starts before I even open Claude Code. I use Linear for issue tracking, and with the Linear MCP server connected, I can tell an agent “write a plan to tackle MRGFY-1234” and it pulls in the issue context, understands the scope, and proposes an implementation strategy. Linear also supports delegating issues directly to coding agents, which closes the loop even further.

On our codebase, we’ve invested in making the agent as autonomous as possible. We documented conventions, testing commands, and dangerous patterns to avoid. We’ve also written custom skills that the agent can invoke to run database migrations safely, query production data, migrate between test frameworks, etc. The Claude Code permissions are tuned to pre-approve safe operations (git add, git commit, grep, mergify, etc.) while still requiring confirmation for git push. The result is that the agent can write code, run tests, generate SQL migrations, compare SQL optimisations, build OpenAPI schemas, and push a stack of commits, all without asking me anything.
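To make the permission setup concrete, here is a sketch that generates a settings fragment of that shape, combined with the plan-mode default mentioned earlier. The key names (`permissions.defaultMode`, `allow`, `ask`) and the `Bash(command:*)` rule syntax reflect my understanding of the Claude Code settings format and should be checked against the current documentation. The exact rule list is an assumption built from the commands named above, not the real configuration.

```python
import json


def build_settings() -> dict:
    """Assemble a Claude Code settings fragment (illustrative only)."""
    return {
        "permissions": {
            # Start every session in plan mode rather than editing right away.
            "defaultMode": "plan",
            # Operations considered safe enough to run without a prompt.
            "allow": [
                "Bash(git add:*)",
                "Bash(git commit:*)",
                "Bash(grep:*)",
                "Bash(mergify:*)",
            ],
            # Operations that still require an explicit confirmation.
            "ask": ["Bash(git push:*)"],
        }
    }


print(json.dumps(build_settings(), indent=2))
```

The printed JSON is what would go into a settings file. Listing `git push` under `ask` rather than leaving it out makes the intent explicit to anyone reading the file later.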

What changes downstream

Once the agent finishes, it pushes its work and I get a set of PRs to review. I review them on GitHub alongside my colleagues, and alongside other automated reviewers like GitHub Copilot code review. When reviewers leave comments, I can point Claude Code at the PR and ask it to address all open threads. We wrote a small internal skill to streamline that step.

This workflow produces more PRs than a traditional one, which has shifted where the bottleneck sits. Review throughput has become a real constraint. We’re still figuring out the right approach. One thing we’ve tried at Mergify is a “low-impact” label that reviewers can apply to acknowledge that a PR is safe enough to merge with a single approval rather than the usual two. It’s a small process change, but it reflects a broader tension: agent-assisted development generates more incremental, well-scoped changes, and the review process hasn’t fully adapted to that cadence yet. We’ll keep iterating on this.

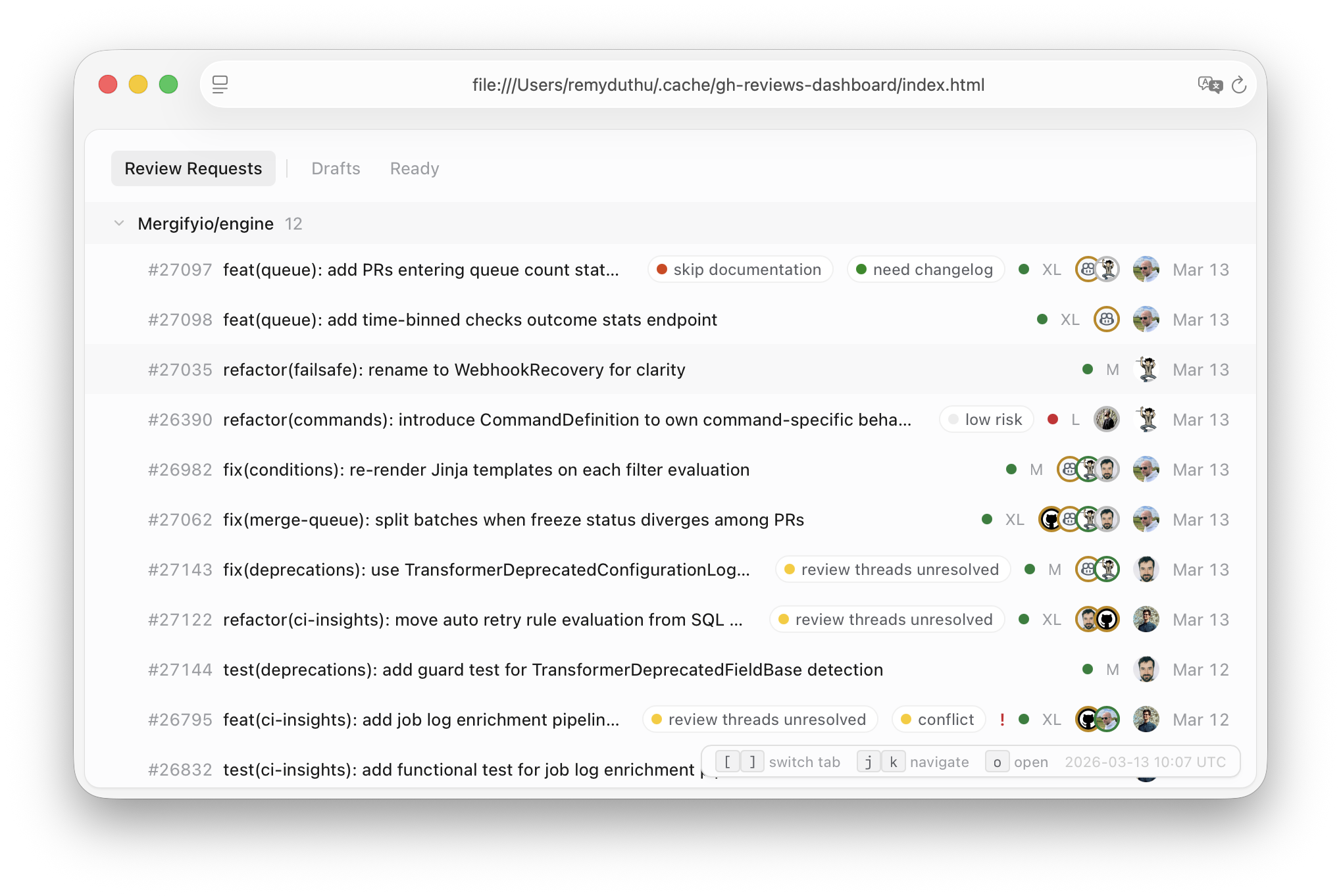
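The “low-impact” rule boils down to a small decision: the label lowers the approval threshold from two to one. A toy model of that rule, with hypothetical function names, looks like this. In practice the rule would live in merge automation or branch protection, not in a script.

```python
LOW_IMPACT_LABEL = "low-impact"


def required_approvals(labels: set[str], default: int = 2) -> int:
    """A PR labeled low-impact needs one approval, otherwise the default."""
    return 1 if LOW_IMPACT_LABEL in labels else default


def can_merge(labels: set[str], approvals: int) -> bool:
    """True once the PR has reached the approval count its labels require."""
    return approvals >= required_approvals(labels)
```

For example, `can_merge({"low-impact"}, 1)` is true, while the same single approval on an unlabeled PR is not enough.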

To stay on top of the review load, I asked Claude Code to build two GitHub CLI extensions: gh-reviews, which lists open PRs requesting my review directly in the terminal, and gh-reviews-dashboard, which generates an HTML dashboard with CI status, reviewer avatars, and keyboard navigation.
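For a sense of what a listing like gh-reviews involves, here is a minimal sketch built on `gh search prs`. It is not the extension itself. It assumes the GitHub CLI is installed and authenticated, and that the `--json` output contains `number`, `title`, `url`, and a `repository` object with a `nameWithOwner` field. The function names are hypothetical.

```python
import json
import subprocess

# Open PRs where a review is requested from the authenticated user.
GH_COMMAND = [
    "gh", "search", "prs",
    "--review-requested=@me",
    "--state=open",
    "--json", "number,title,url,repository",
]


def format_reviews(raw_json: str) -> list[str]:
    """Turn the CLI's JSON output into one line per pull request."""
    lines = []
    for pr in json.loads(raw_json):
        repo = pr["repository"]["nameWithOwner"]
        lines.append(f"{repo}#{pr['number']}  {pr['title']}  {pr['url']}")
    return lines


def list_reviews() -> list[str]:
    """Run the GitHub CLI and return the formatted review queue."""
    result = subprocess.run(GH_COMMAND, capture_output=True, text=True, check=True)
    return format_reviews(result.stdout)
```

Calling `print("\n".join(list_reviews()))` prints the queue in the terminal. The formatting step is kept separate from the CLI call so it can be exercised with canned JSON.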

I don’t think this way of working is for everyone, or for every task, and it’ll probably still change a lot in the future. Some problems still reward, maybe even require, sustained single-threaded focus. But for the kind of feature work and maintenance that makes up the bulk of a product engineer’s week, learning to manage agents in parallel has been a meaningful shift for me. The skill isn’t prompting anymore. It’s orchestration.